Hi Everyone,

Hoping you’re well.

Before we begin, two quick notes:

I’m sending this newsletter a week later than usual—apologies—as I have been focused on launching the paperback of A Year in the Art World in the U.S. Again, I hope you will check it out if you have not yet.

Secondly, last week this newsletter reached 1,000 subscribers, so I wanted to thank you all for your continued interest. I’m sharing the graph above so you can see its growth since last November, and to be transparent about things like this. (You can also see the time I took off from this project between the start of the pandemic and my Meta layoff :), which shows that life often doesn’t move in a linear fashion.) I’d also like to ask you, especially newer subscribers, to share feedback. If you think of something that could fit into the concerns of this newsletter, or have a question you think I could answer well here, reach out and suggest it, either via a comment or my website.

OK, so that’s my preamble…

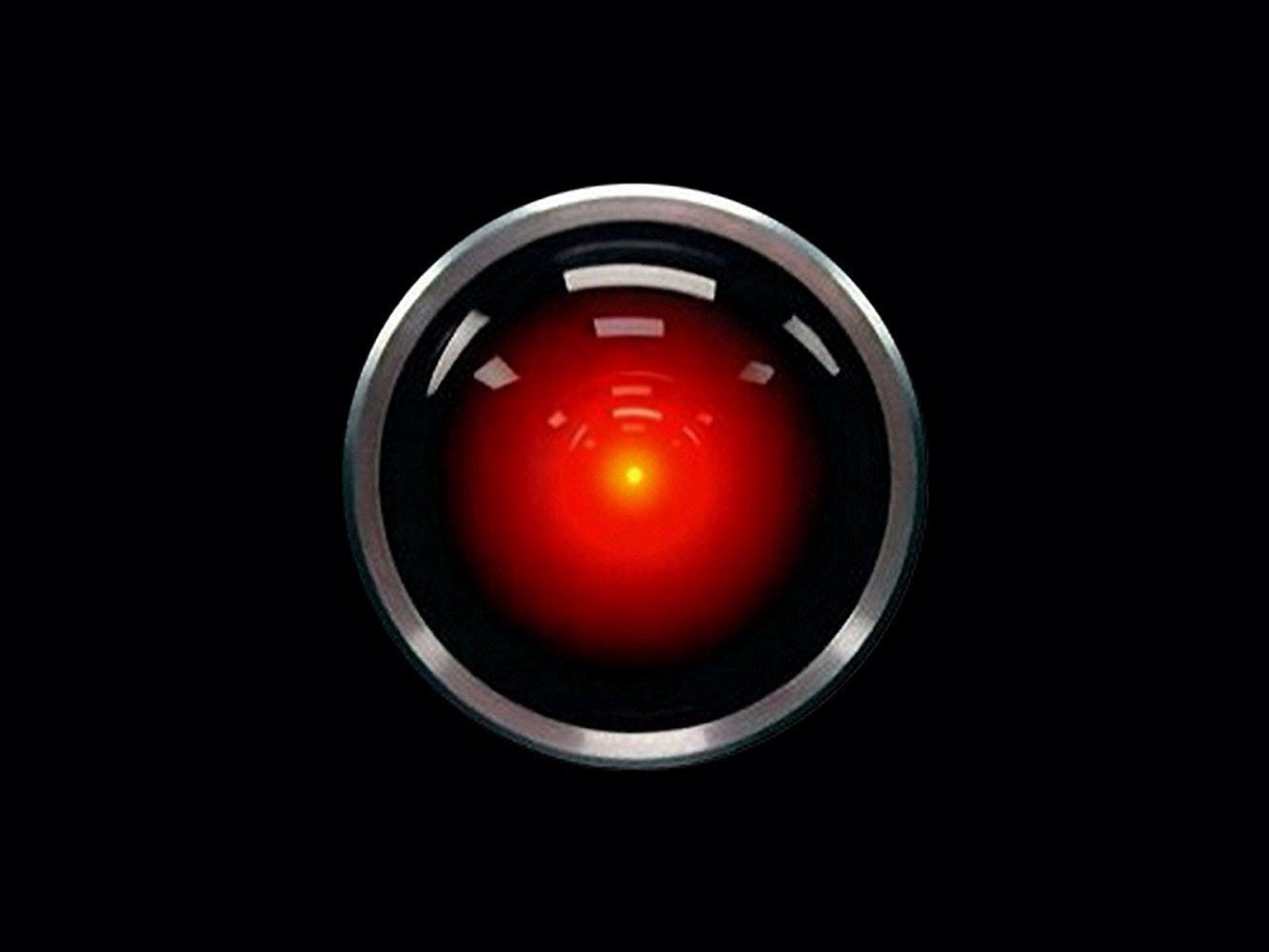

This week, I wanted to focus on one thing again, and gather some of my thoughts regarding AI and art, especially in the wake of having recently heard a talk by Gary Marcus, who informs much of my thinking below. Again, I am doing this because of how important I believe it is to be up-to-speed on this crucial issue.

Marcus is a scientist (Emeritus Professor of Psychology and Neural Science at NYU), a best-selling author, a serial entrepreneur, and a leading voice in AI. You can read more about him on his personal website (and later on I’ll share some talks he has given).

Marcus believes AI will drastically increase the amount of defamation, disinformation, spam, fake books, and fake people (cloned voices, for one) online, and that this will create nostalgia for (“pure,” “real,” somewhat true) Internet data from before 2021.

You can see the seeds of this trend in the explosion of ChatGPT outputs, which, as I have discussed previously and hope you know by now, are not necessarily designed to tell the truth and thus spread vast amounts of misinformation (even though they do it in a way that brilliantly mimics human speech and writing).

Marcus also talks about AI's risks to the financial markets and elections. For example, what happens if the Internet is flooded with thousands of convincing pictures and accounts of the Pentagon exploding? At the very least, someone could use this as an opportunity to short the market, given the high probability that it would prompt some market response. Now, imagine something like that happening every day.

Marcus is optimistic about regulation, such as the recent Future of Life Open Letter and the prospect of international governance over AI, even if he knows it will be a highly complex endeavor. He says there is a general consensus that we dropped the ball in regulating social media and that the same won’t happen with AI. He also sees promise in the models of regulatory systems like the U.S. Food and Drug Administration (FDA). At the same time, he acknowledges that no new laws have been passed yet here or in Europe, and he says we don’t yet have any good responses to the aforementioned risks. He’s also concerned about “regulatory capture,” the risk that regulatory agencies end up governed by the interests they regulate rather than by the public, because the government is only meeting with Big Tech.

Part of regulation, according to Marcus, includes (as a start) full transparency around what AIs are being trained on (something I have called attention to recently). OpenAI, for example, has not actually been open for the last two and a half years. While its usage has gone down since it first launched, it’s still shaping the lives of 180 million users per month, and OpenAI won’t tell us how it works.

Marcus also suggests having scientists be in the loop so we can create something like a center for the advancement of trustworthy AI.

If you’re interested in learning more about the risks of AI, I suggest (as a start) checking out one or all of the following videos featuring Marcus:

So, how does this all relate to the art world?

I have a number of thoughts:

As one of the principal communities for images, the art world should take a significant stand on image-generation tools. For example, we should be involved in demanding to know what they have been trained on and how they work. In the blink of an eye, these tools might become incredibly powerful and dominant, and because of our slowness or the fuzziness around copyright and AI, we might already be losing our chance to define or redefine authorship, uniqueness, and copyright of artworks in the age of AI. I also hope that the recent implosion of the NFT market won’t stop art world types from engaging with another tech subject, which already seems to be much more influential than NFTs.

We should already be having conversations about what parts of the art world might be using AI, other than image-generation tools. For example, is AI going to be used as a marketing tool for galleries or auction houses? Is AI going to be used to create gallery press releases, to automatically match new artworks with potential clients, or to conduct art-historical research for authentication (much more than it already has started to be)? Will AI be used in scholarship? As someone who has helped build such AI tools in the past, e.g. Artsy’s Art Genome Project, I’m very aware of the inherent risks involved, the high prospect of errors, and the reality that many of the AIs currently functioning “automatically” still necessitate human oversight.

While the drastic rise and fall of NFTs makes us less optimistic about how AI might be integrated into art creation, we need to start having serious conversations to learn how AI is affecting it, even just to educate ourselves. Designers and ad agencies are already using it as an image-creation tool and as a way to generate vast amounts of unique images. Some are becoming quite adept at prompting the image generators and (as I said recently) making prompting a new art form. It would seem advantageous for artists to embrace this new skillset now, rather than waiting until other fields or non-professional artists become far better at it, especially since I believe artists will inherently create better outputs.

Finally, the art world needs to realize AI is just focusing on certain intelligences and be engaged in promoting the intelligences that, in Marcus’s view, can’t be generated through AI: critical thinking and creativity. In this respect, it also needs to interrogate certain recent claims that AI scores high marks in creativity because it can ace creativity tests.

Well, the above is a start, and that’s it for this week. Apologies to those of you looking for topics other than AI :). I’ll return to those next week, but please trust my attention to this as (again) I truly believe the repercussions will be massive.

Best,

Matthew

You lost me on embracing it as an artist. The moment it came out that these AI programs were trained on the backs of artists without payment, licensing or credit, I was done fiddling with it. Now people want to be able to pass AI-generated images off as art without talking about where they made them. Been in heated debates on Instagram with people who refuse to understand how artists label their artwork. I would guess they don’t want to say it’s an AI-generated image because then they’d have to think about the artists who really created the work.

A topic close to my heart. Thank you for covering it.